Any business wants software to work for it — not the other way around. AI systems are no exception. Large language models (LLMs) are powerful, but they’re not cheap. Many companies jump into using a basic LLM, only to realize later it’s burning through their budget faster than expected. What initially appeared simple turned out to be a costly surprise.

So, how to reduce LLM costs without giving up on AI altogether? That’s exactly what we’re here to explore.

I’m Andrii Faschlin, ML Engineer at Uptech. In this post, I’ll walk you through the key factors that drive LLM costs, the strategies we use to bring them down, how LLMOps fits into the picture, and how Uptech can help you build a cost-effective AI product, even on a tight budget.

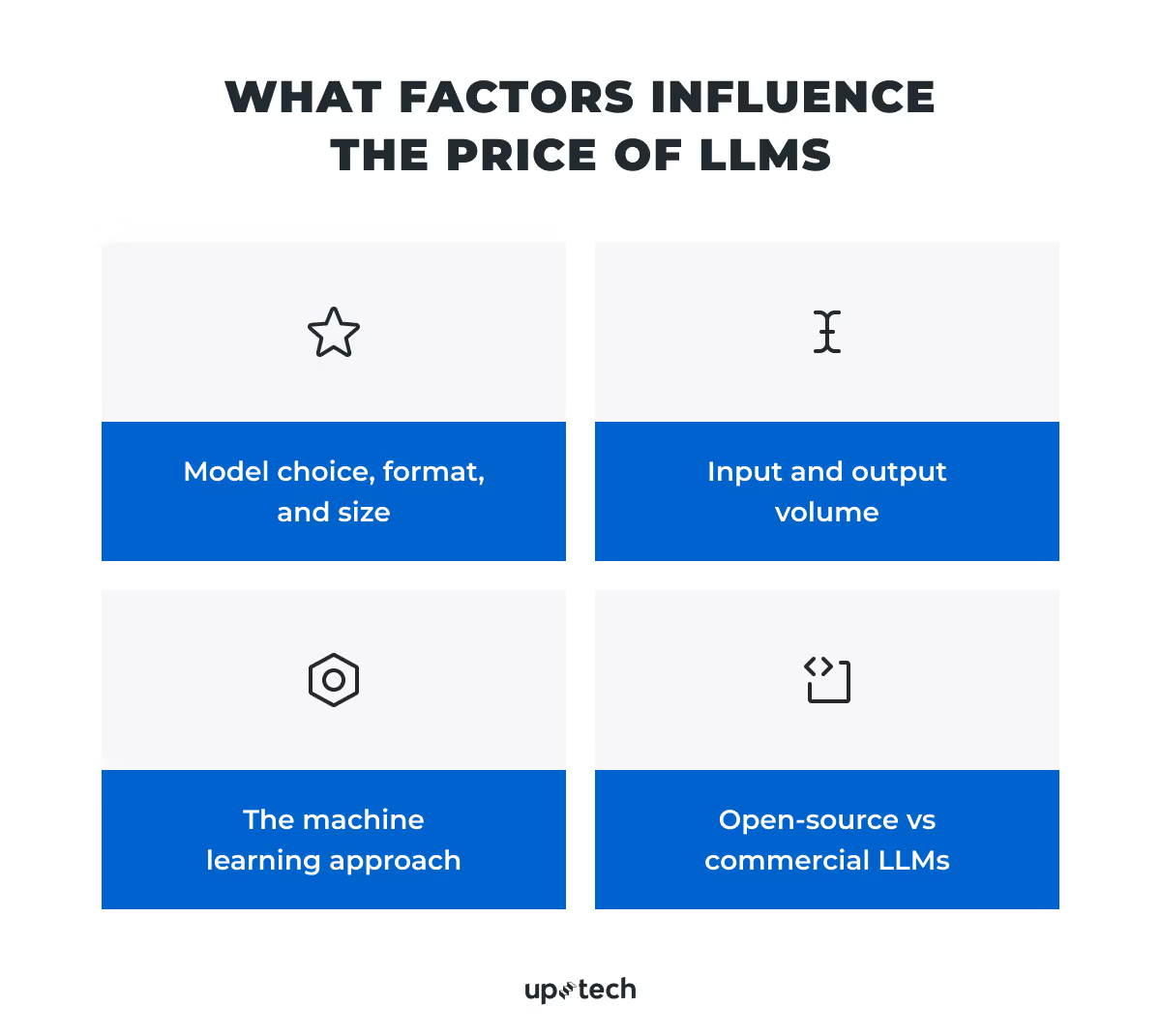

LLM Cost Optimization: Key Factors that Influence Price

Before we get into how to reduce large language models’ costs, we need to understand what drives them. With this knowledge, cost-saving decisions become much easier.

From my experience, there are four key factors that shape the price of working with large language models. Let’s take them one by one.

Factor 1. Model choice, format, and size

Not all LLMs cost the same, not even close. Different models come with different pricing structures based on their architecture, capabilities, and supported modalities.

Model size

Size usually refers to the number of parameters. The latter are the internal values the model uses to process and generate information. A model like GPT-4 has hundreds of billions of parameters. More parameters often mean better reasoning and fluency, but also drive up compute requirements and, as a result, the price.

Smaller models (like Mistral 7B or LLaMA 13B) use fewer parameters and cost far less to run. They often perform well on focused tasks, so if your use case doesn’t require deep analysis or creative reasoning, starting small makes sense.

Model format

Some models are text-only. Others are multimodal and support text, images, code, or audio. Multimodal LLMs usually cost more.

On the other hand, computer vision tasks, like an AI medical system that processes MRIs and CT Scans, require models that support image inputs. These models are more demanding in terms of compute and memory, which often leads to higher costs.

Cost difference example

Just by looking at this, you can see the cost difference is massive. GPT-3.5 Pro is over 150x more expensive than Mistral 7B in terms of output pricing. In simple terms, GPT-3.5 Pro might generate a paragraph, while Mistral could give you several responses for the same cost.

Your choice should depend on your use case. Some models produce highly detailed and accurate answers, but if a smaller model solves the task just as well, that’s already a cost reduction.

Factor 2. Input and output volume

The second major factor is the amount of data the model processes: both incoming (input) and outgoing (output). That’s where tokens come in.

What are tokens?

Tokens are chunks of text the model uses to read and generate language. One token equals about 4 characters of English text or 0.75% of a word. So a sentence like “AI chatbot is helpful” breaks down into 4 tokens.

LLM pricing is usually based on token usage. You pay for:

- Input tokens — the prompt you send

- Output tokens — the model’s reply

The more text you pass in or request back, the more tokens you consume, and the higher the cost. Long prompts and detailed outputs can burn through your token limit quickly. If you're working at scale, this adds up fast.

Tools like OpenAI’s tokenizer can help estimate how many tokens your data will use with a given model. This makes early cost planning easier.

If your model processes visual data (like images or PDFs), it still uses tokens, but these are not text-based. Instead, the data is converted into token-like representations, such as image patches or embeddings. These also count toward your usage and can significantly affect cost, depending on file size and model type.

At Uptech, we often advise clients to cut unnecessary prompt fluff, shorten outputs where possible, and structure queries to use fewer tokens. In some cases, you may even avoid LLMs entirely by using lightweight alternatives like decision trees when it makes sense.

Factor 3. The machine learning approach

The way you use the model also affects the cost. There are three main approaches:

- Zero-shot. You use a pre-trained model to generate predictions right away. This works best for basic use cases where the task is clear and doesn't need context. But if your prompts require lots of examples to work well, this can get expensive fast.

- Few-shot. You include a few labeled examples in the prompt. This helps with slightly more complex tasks, like sentiment classification. It improves results but also adds more tokens per request.

- Fine-tuning. You train the model on your own labeled dataset. This requires an upfront investment and may increase the per-token rate slightly. But once trained, the model doesn’t need lengthy few-shot prompts to perform well. This reduces token usage and can make requests more cost-effective in the long run.

The best thing to do is to run experiments and compare these options. What looks cheap at first might become expensive at scale, and vice versa.

Factor 4. Open-source vs commercial LLMs

Another key factor behind LLM cost is whether you choose an open-source or commercial model.

- Open-source LLMs (like LLaMA or Mistral) are free to use and modify. They’re transparent, community-driven, and evolving fast. But they may require more engineering effort to deploy and maintain.

- Commercial LLMs (like GPT-5 or Claude) offer out-of-the-box performance, support, and infrastructure, but come with higher usage fees, and any customization will drive extra costs.

In most scenarios, if your team can handle setup and tuning, open-source options can significantly reduce LLM costs. But for mission-critical tasks or fast go-to-market, commercial models might still be worth the investment.

Now that we have dealt with the factors that impact the costs of LLMs, we can move on to the strategies that can help reduce the pricing tag.

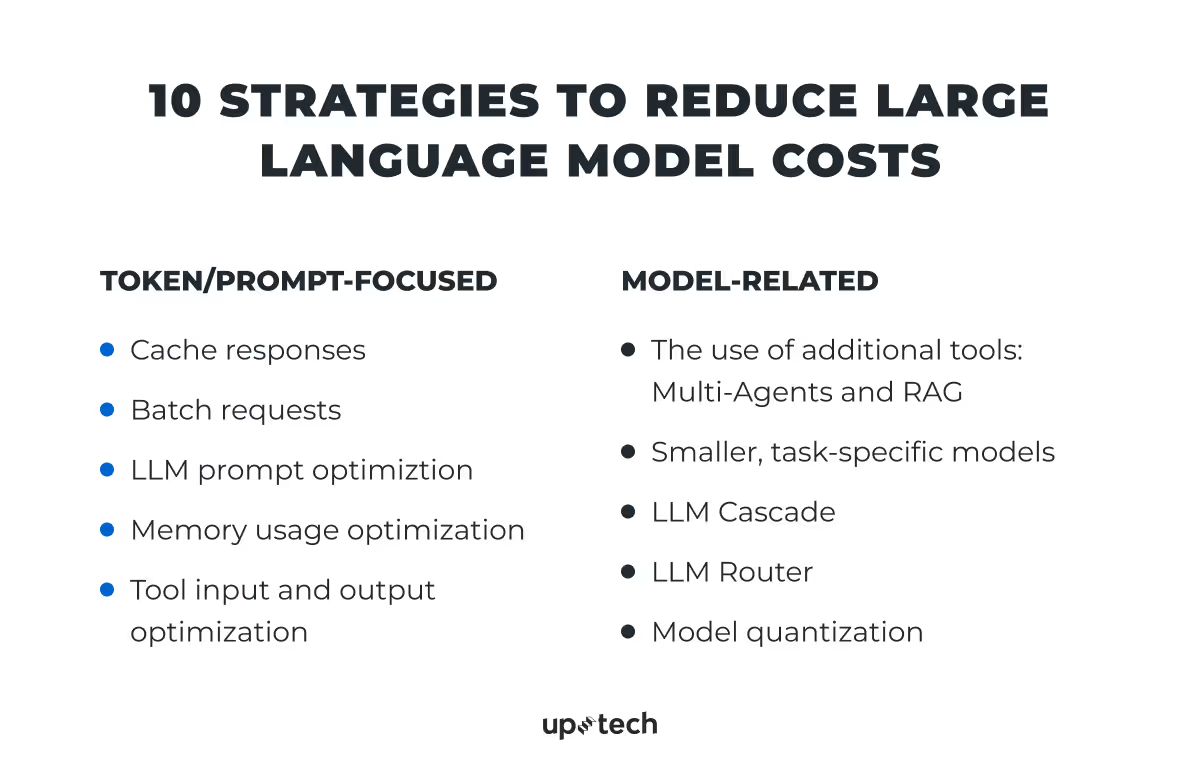

10 Strategies to Reduce Large Language Model Costs

At the high level, there are 2 main approaches that can help you reduce the LLM costs: one approach limits the amount of data the model processes, and the other adjusts the model setup. The first focuses on tokens and prompts (smaller inputs, shorter outputs, and reused responses). The second approach relies on model-level adjustments (when you choose more efficient architectures, use smaller models, or integrate external tools).

Since LLMs don’t have fixed costs, it’s important to balance cost and performance. Let’s take a closer look at each strategy as the lever we can pull to reduce LLM costs.

Strategy 1. Use cache responses

One of the simplest ways to cut down the LLM costs is to avoid making the same expensive call twice. What I am talking about is cache responses.

In most AI use cases, users often ask similar, if not identical, questions. If the system already answered something once, there’s no reason to send that request to the model again. Instead, it can return the saved response instantly.

For example, if your app explains internal policies or pricing models, chances are the same questions will keep popping up. It’s possible to store the responses to those prompts, and, in this way, you can cut down on token usage and speed up response times for the user.

Basic caching works with exact matches. But with LLMs, input phrasing often varies, even when the meaning stays the same. That’s why semantic caching works better here.

Semantic caching doesn’t look for the same words; it looks for the same intent. It maps incoming queries to vector embeddings and compares them to past ones in a vector database. If it finds a close match, it reuses the previous answer.

Tools like GPTCache help set this up. You plug in your embedding model and vector store, and the system checks for reusable responses automatically.

If you already pay for every request that hits the model, caching gives you a way to eliminate the need to pay for duplicates.

Strategy 2. Opt for batch requests

Another LLM cost optimization option is to group multiple prompts into a single request instead of sending them one by one.

Each API call comes with overhead. If you send 100 small prompts individually, you pay for each call’s setup, processing, and latency. But when you combine them into a batch, you reduce that overhead while still getting all the answers.

This works best for tasks like:

- Processing large datasets

- Running quality checks

- Pre-generating answers to expected questions

- Generating content variations at once

Many LLM APIs support batch processing by design; you can pass a list of inputs in a single call. That means fewer requests, less overhead, and lower total cost per result.

As the trade-off, batched responses may take slightly longer to return, and debugging errors becomes trickier. But when scale matters, batching can bring significant savings with minimal changes to your workflow.

Strategy 3. Optimize LLM prompts

One of the most overlooked ways to reduce LLM costs is to optimize the prompts you send to the model. Every character in a prompt counts toward your token usage: The longer the prompt, the more expensive the request becomes. Prompt optimization can reduce token usage and bring down the overall cost by up to 35%.

What does prompt optimization look like in practice? We rewrite the text to remove anything unnecessary — parts that don’t affect the model’s ability to produce a good answer. Then we restructure the prompt to make it clearer, so the model understands the task right away and returns a precise response. The clearer the prompt, the fewer tokens the model needs to understand it, and the fewer tokens it needs to generate a high-quality answer.

Here’s a simple example:

Original prompt: "Please summarize the following text for our internal report. It’s important to make the summary clear and concise so our team can quickly understand the key points. Here's the text:"

Optimized prompt: "Summarize the following text in 3 bullet points:"

The optimized prompt is shorter, more direct, and easier for the model to process, but still delivers the same result. Multiply that token difference across thousands of requests, and the savings add up fast.

For more advanced use cases, tools like LLMLingua (developed by Microsoft) take optimization even further. This method removes noise and redundant tokens from prompts automatically. It’s especially useful when your prompts contain large amounts of context or background info.

Prompt optimization is usually done during the model testing phase. We test multiple prompt versions, track their accuracy, and find the one that performs best with the lowest token count. This is a critical step in LLM development and one that we never skip.

Skipping this phase may result in poor model performance, high token consumption, and missed opportunities to integrate useful tools or improvements. When budgets are tight, taking the time to optimize prompts pays off fast.

Strategy 4. Optimize memory usage

Some LLM-based systems rely on memory to keep context between interactions. For example, this can be an AI chatbot that remembers past conversations or user preferences. While this improves relevance, it also increases cost.

Why? Because each time the model replies, the memory must be sent as part of the prompt. The more context you include, the more tokens you pay for.

To reduce that overhead, you need to control what enters the memory and how the system uses it.

Here’s what helps:

- Keep only the parts of the history that affect the current output

- Remove outdated or low-impact data from memory

- Use retrieval systems to fetch specific context instead of passing everything

For instance, instead of sending an entire chat history, we can design a logic that pulls only relevant exchanges based on the user’s current query. This reduces prompt size and doesn’t hurt response quality.

In our experience, memory optimization can lower token usage by 20-40%, especially in multi-turn tools like customer support bots or internal conversational AI assistants.

If your product requires memory, use it carefully.

Strategy 5. Optimize tool input and output

Even if you’ve already optimized your prompt structure, you might still pay for processing too much text, or for getting more output than you actually need. That’s where tool-level input and output optimization steps in.

This strategy trims the data your system sends to the LLM and reduces the length or format of what it asks for in return. The goal is simple: avoid passing in or generating anything that doesn’t directly impact the result.

Let’s break it down.

- Input optimization. If your tool sends entire email threads, long PDFs, or database records into the model, you might be wasting tokens. Instead, it’s possible to filter or pre-process the content before it reaches the model. Keep only the sections that matter.

- Output optimization. You don’t always need a long-form answer. In many use cases, three bullet points or a short summary are enough. Setting limits on the output format, like “respond in one sentence,” helps reduce cost without affecting usability.

This strategy works especially well in:

- LLM-powered summarizers, extractors, translators

- Internal tools that run LLM calls in bulk

- Multi-step pipelines where each step adds tokens

How is this different from prompt optimization?

Prompt optimization focuses on how you phrase the instruction: the choice of the clearest wording that gets the right answer with minimal tokens.

Input/output optimization focuses on what data you send and what type of answer you want back. Even the best-written prompt won’t help if you overload the model with unnecessary context.

Used together, these two strategies give you the best of both worlds: smarter prompts and smoother data flow.

Strategy 6. Opt for additional tools: Multi-agents and RAG

Using additional tools can help reduce LLM costs while maintaining high-quality output. Two helpful options here are multi-agent systems and retrieval-augmented generation (RAG).

Multi-agent systems allow the model to rely on external tools for specific tasks. For example, instead of solving a complex math operation internally, the model can use an existing math library. This approach can lower the LLM’s workload and cost and still deliver accurate results. However, it’s important to test these setups to see how they perform on your specific data.

RAG is useful when you want to build a knowledge base (for example, a set of predefined questions and answers) that your system can access during inference. This makes the development phase slightly more expensive, but in the long run, it helps reduce LLM usage.

For example, if we want to develop a chatbot or an AI assistant that helps with FAQs, RAG becomes a cost-effective solution. The model doesn’t need to search through all available documents or rely on general knowledge. Instead, it refers directly to your own content and provides more accurate responses.

Strategy 7. Choose smaller, task-specific models

We’ve already covered how model size affects cost. But there’s another angle worth highlighting: you don’t always need a general-purpose LLM to solve a specific problem.

Instead of running every query through a large model like GPT-4 or 5, the team can choose smaller models that were trained for a single task, like sentiment analysis, summarization, or classification. These models are often open-source, cheaper to run, and faster in production.

In many cases, they also perform better than general models on narrow tasks. For example, a distilled BERT model fine-tuned for sentiment detection will likely outperform a giant model that tries to do everything at once, and it will do so at a fraction of the cost.

This approach works best when your product focuses on a fixed set of tasks. You evaluate what those tasks are, find (or train) a smaller model that covers them, and integrate it directly into your pipeline.

The result: lower cost, faster response, and fewer infrastructure requirements, all with the same or pretty much the same quality.

Strategy 8. Consider an LLM cascade

Not every task needs a high-end model. One way to reduce the costs of large language models is to build a cascade — a setup where simpler, cheaper models handle most of the work, and only the hardest queries go to a more powerful model.

Here’s how it works: We start with a lightweight model like GPT-3.5, Mistral, or a task-specific model. If that model returns a confident and accurate response, we serve it to the user. If the answer seems uncertain, incomplete, or doesn’t meet a quality threshold, we pass the same request to a stronger model, like GPT-4 or Claude.

So yes, for escalated queries, you pay twice: once for the lightweight model and again for the stronger one. However, this only happens for a small fraction of total requests. The majority are handled by the cheaper model, which significantly reduces average costs overall.

This approach lets us:

- Reduce usage of high-cost models

- Handle the majority of queries with faster, cheaper systems

- Keep quality high where it actually matters

LLM cascades require a bit of logic around fallback conditions. There may be a need to define scoring thresholds, use evaluation models, or implement user feedback signals. But once set up, they help balance cost and performance much more effectively than using a single model for everything.

We’ve used this approach in assistant-like tools, content validation workflows, and data processing pipelines, and in every case, it lowered the average cost per request.

Strategy 9. Utilize an LLM router

Another way to improve the cost-effectiveness of LLMs is to route each task to the most suitable model instead of sending everything to a general-purpose one. This approach is called LLM routing.

An LLM router automatically chooses the right model based on the task type. For example, it can send complex math problems to a specialized model like MathGPT, creative writing tasks to a model fine-tuned for content generation, and short classifications to a lightweight model like DistilBERT. This avoids using expensive, overpowered models where they’re not needed.

Unlike a cascade, where one model tries first and others act as backups, routing happens upfront. The system decides which model to use before the prompt is sent. This reduces processing time, improves output relevance, and lowers token usage.

There are ready-made solutions for this, including Neutrino AI router, which automatically assigns tasks to the right model based on intent detection and routing logic.

This setup works well in systems that handle mixed task types, like AI copilots, assistants, or platforms that support both analytical and creative workflows.

Strategy 10. Quantize the model

One more strategy to optimize LLM costs is to quantize the model. That is, reduce its numerical precision to make it smaller and faster, without sacrificing much in performance.

LLMs usually run on 16-bit or 32-bit floating-point weights. Quantization converts those weights into lower-precision formats, like 8-bit or 4-bit integers. This makes the model lighter, faster to run, and less memory-hungry, which means lower LLM costs across both training and inference.

What’s more, many models can operate with minimal accuracy loss after quantization, especially when the task doesn’t require extremely nuanced language understanding. That’s why quantized models are now widely used in production settings where performance matters but cost is critical.

This method works particularly well when:

- You deploy models on edge devices or small servers

- You use open-source models like LLaMA, Mistral, or Falcon

- You combine it with other cost-saving LLM tactics like distillation or routing

Popular tools like BitsAndBytes or AutoGPTQ make the quantization process faster and more stable.

LLMOps: What Is It and Can It Help Reduce LLM Costs?

If your product relies on LLMs, it's not just the model choice or prompt size that affects your budget. The way you structure, deploy, and manage the whole LLM pipeline plays a major role in long-term cost and efficiency. This is where LLMOps comes in.

LLMOps, a concept derived from MLOps, focuses on how to operate, monitor, and maintain large language model systems in production. It covers everything from infrastructure design to cost monitoring, performance tracking, and workflow orchestration. While it's still an emerging field, done right, it can help bring your LLM costs under control.

Cloud-based workflows offer cost levers

Most LLM pipelines today run in the cloud, often on platforms like AWS, Azure, or GCP. These environments come with tools that allow you to control cost at the infrastructure level, even without changing your models.

For example:

- AWS Step Functions can reduce the number of intermediate processing steps in the pipeline. Instead of triggering LLM calls from scattered services or manual tasks, you can define stateful logic that moves smoothly from one task to the next.

- AWS Lambda lets you build LLM workflows using lightweight serverless functions. Each function performs a specific step (e.g., prompt generation, response parsing, logging), runs only when triggered, and shuts down immediately after. This model helps avoid idle compute costs and supports pay-as-you-go billing.

These tools can also integrate with your internal systems, sending results to a database, triggering alerts, or passing structured outputs into another business process. When LLM usage is part of a larger automation setup, LLMOps makes it more predictable, maintainable, and cost-efficient.

Pay-as-you-go pricing models

One of the biggest advantages of cloud-native infrastructure is flexibility. You don’t need to commit to a fixed number of compute instances. Instead, you only pay for what you use. For AI workloads where usage can spike (e.g., after product launches or during investor reporting), this model keeps costs manageable.

For LLMs specifically, pay-as-you-go billing works especially well when:

- The system handles requests on demand

- It runs dynamic batch jobs

- Usage patterns vary across clients or time zones

This kind of elasticity is hard to achieve with fixed infrastructure, especially if you're not sure what load to expect.

Built-in cost monitoring vs. external tools

During our research and project work, we came across various third-party tools that offer LLM cost monitoring. These tools promise detailed usage analytics and budget controls. But the question remains: are they truly helpful, or just a nice-to-have feature dressed up for marketing?

In our experience, built-in monitoring tools from cloud providers are usually enough, at least for small to mid-size AI projects. For example, AWS provides detailed cost breakdowns per service, usage reports, and alerting features. While they may not give deep model-level insights (e.g., token-level breakdowns per endpoint), they help track the biggest cost drivers and catch problems early.

As for third-party tools, they can be worth testing, especially if your system includes many LLM calls across different platforms. But they’re not essential, and depending on the tool’s own pricing, they could even increase your total spend.

Our take

At Uptech, we’ve helped multiple clients implement efficient, cloud-native LLM pipelines. Some required strict cost control from day one. Others came to us after realizing their setup was burning through budget fast.

In both cases, LLMOps allowed us to optimize workflows, reduce waste, and bring structure to what otherwise would be a fragile and expensive architecture.

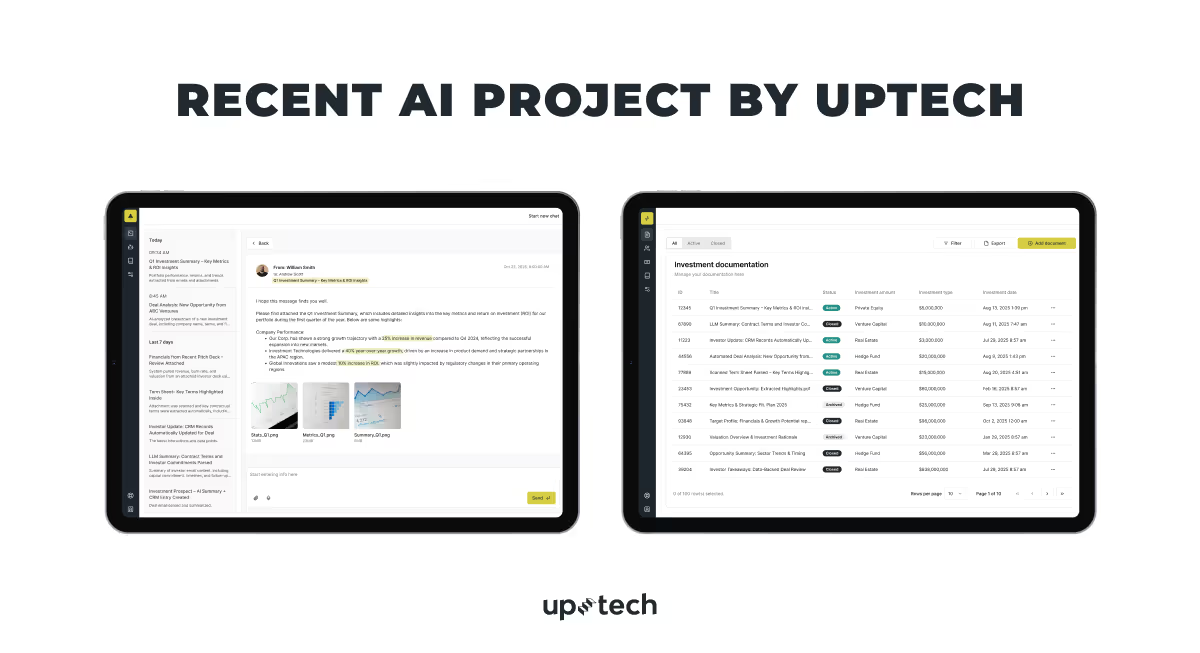

How Uptech Can Help You With LLM Cost Reduction

Uptech is a software development company with firsthand experience in AI projects, and we know how to approach any challenge related to AI, including such a thing as cost. Each AI product we’ve worked on has been unique, with its own budget, technical limitations, and business goals. That’s why we focus on designing solutions that strike the right balance between cost, performance, and long-term flexibility.

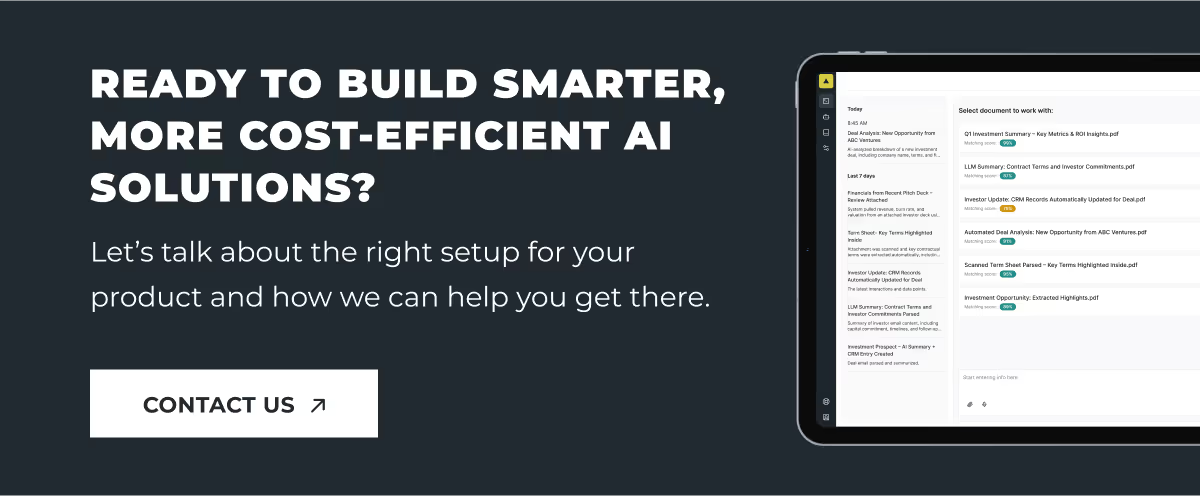

For example, when Presidio Investors, a U.S.-based fintech company, needed to reduce the manual work involved in processing investment data, they reached out to us. After hearing about our previous project for another investment platform, they asked us to bring AI data automation into their internal workflows.

At Uptech, we always tailor solutions to match the client’s technical needs and budget. In this case, we chose Azure OpenAI GPT-4o because it offers strong reasoning capabilities, but at the same time, it’s more cost-efficient than earlier GPT-4 versions. This helped us strike the right balance between precision, performance, and profitability.

Our team built a system that extracts key data from emails and attachments, converts it into structured JSON, and triggers CRM updates automatically. Everything runs in a secure private cloud setup. As a result, the client reduced manual work by 80 percent and now processes up to 100 investment deals per day.

Whether you’re building from scratch or scaling an existing product, reducing LLM costs doesn’t have to mean sacrificing quality. It just takes the right setup — and the right partner.

.avif)