Poor data quality is a primary driver of AI failure in many cases.

According to the research “The Root Causes of Failure for Artificial Intelligence Projects and How They Can Succeed”, more than 80% of AI projects fail not because algorithms are weak or infrastructure is lacking, but because data lacks quality, consistency, and production coverage, governance is unclear, and pipelines remain fragmented across siloed systems.

You can invest in advanced architecture.

You can staff top engineering talent.

But the initiative still fails if the data entering the system is incomplete, inconsistent, or misaligned with real production behavior.

Underinvest in data, and low-quality AI triggers a domino effect:

Poor data → model underperformance → unreliable outputs → workflow disruption → remediation cycles → rising costs → delivery delays → loss of organizational trust in AI

For leaders, this shifts AI from a technical challenge to an operational one. What data is collected, how it is governed, and when it is production-ready are strategic decisions with direct business impact.

Organizations that treat data quality as a prerequisite are able to move AI from pilots into reliable production use. Those who don’t end up stuck fixing the same problems over and over again, without ever solving the real cause.

My name is Artem Havryliuk, and I’m an ML Engineer at Uptech, a software development company. I have over three years of experience working on AI projects. Together with our ML Lead, Oleh Komenchuk, I’ll walk you through the AI data quality process, so you can better understand how it works and make more informed strategic decisions.

Before we go further, here's a quick summary of what this article covers:

- AI data quality is defined by six core dimensions: completeness, consistency, accuracy, timeliness, relevance, and label integrity — each directly impacts model reliability.

- Most AI work happens before modeling: data preparation includes access approvals, data discovery, extraction, labeling, cleaning, pipeline building, and ongoing maintenance.

- Label integrity is critical: if labels are wrong or inconsistent, the model learns incorrect patterns — and no algorithm can fix that later.

- Data preparation is iterative, not one-time: pipelines break, data changes, and models require continuous updates to stay aligned with reality.

- High-quality AI requires coordinated practices across three levels: strategic (investment, ownership, governance), technical (validation, versioning, monitoring), and organizational (domain expertise, labeling processes, cross-team alignment).

Let’s get started.

Why Data Quality Determines AI Success?

One of the most common misconceptions in AI strategy is that results depend mainly on the model you choose. In controlled benchmark tests, where models are evaluated on standardized datasets, this may be true. But in production environments, performance depends far more on data quality, coverage, and labeling integrity.

Even the most advanced models underperform when the training data is incomplete, inconsistent, or unrepresentative because AI systems learn patterns from data.

If the data contains gaps, noise, or biased examples, the model will reproduce those issues in its predictions.

Poor data quality directly affects model accuracy and reliability. Missing values hide important signals, inconsistent labels confuse the learning process, and unrepresentative datasets cause models to fail when they encounter real-world scenarios that were not present in training.

AI data quality becomes a boardroom issue because technical defects translate into operational risk and business consequences.

Here are some cases:

Real-world example: Amazon AI recruiting bias

One of the most well-known real-world examples is Amazon AI's recruiting bias.

In 2018, Amazon abandoned an internal AI recruiting tool after discovering that it systematically penalized resumes associated with women. The model had been trained on historical hiring data that reflected a male-dominated workforce in the tech industry. As a result, the system learned patterns that favored candidates whose profiles resembled past hires and downgraded resumes containing indicators associated with women, such as attendance at women’s colleges.

Once the bias was identified, Amazon discontinued the project before deploying it widely. The case became one of the most cited examples of dataset bias in machine learning and sparked broader scrutiny of AI hiring tools across the industry. It also pushed companies to introduce stronger fairness testing and dataset auditing practices when developing AI systems for high-stakes decisions like recruitment.

AI Data Preparation Process Explained

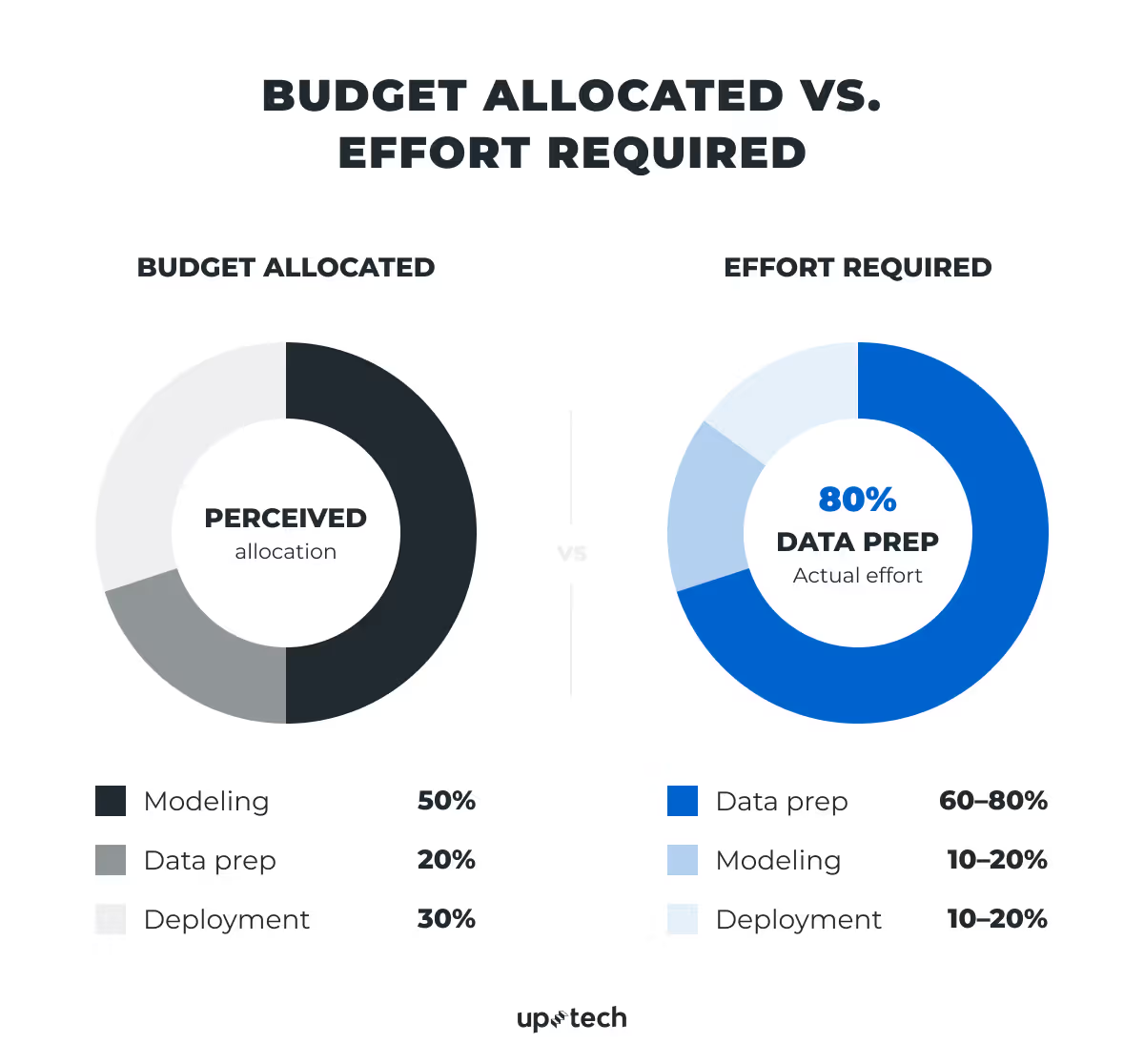

Data quality basically shapes the entire project. You’ve probably heard that 80% of AI work consists of data preparation. It sounds exaggerated, but in practice it holds up.

Model training is the part everyone sees. It is structured, measurable, and relatively fast. But before you get there, there is a large amount of invisible work that makes training possible.

Raw data is rarely ready to use. It needs to be cleaned, combined, validated, and often completely restructured. What looks like a dataset at first glance is usually just a starting point.

That is why model training might take days or weeks, while preparing the data behind it can take months.

This gap often catches teams off guard. Budgets are planned as if data is already usable. In reality, teams spend significant time just getting it into a workable state.

The takeaway is simple. Most of the effort in AI products is not in building models but in making the data usable enough for those models to work.

What are the key steps in AI data preparation?

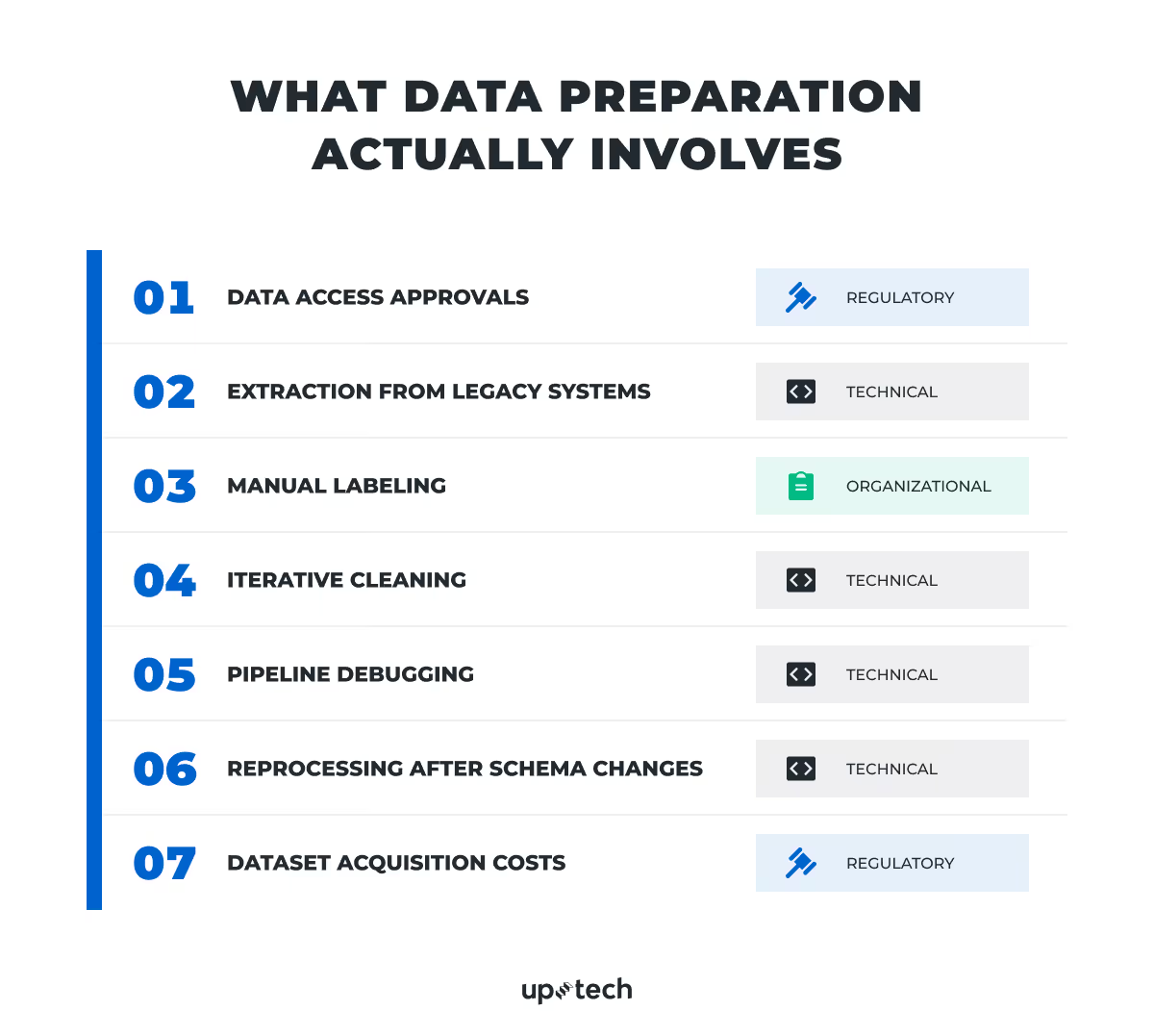

Data preparation absorbs effort across multiple layers: technical, organizational, and regulatory.

Data access approvals

In regulated industries, teams cannot simply start using data. Every dataset has to be reviewed before access is granted.

Organizations need to confirm whether the data can legally be used, whether it contains sensitive information, and who is allowed to access it. This involves multiple stakeholders: legal teams check usage rights, compliance ensures alignment with regulations, security defines how data can be accessed and stored, and business owners approve whether the data should be used at all.

Because each group evaluates risk differently, approvals often take time and require several iterations. In practice, this step can take weeks, and in stricter environments, even longer.

Finding and extracting the data

Once access is approved, the next challenge is simply locating the data.

In most companies, data is not stored in one place. It is spread across legacy systems, spreadsheets, logs, and internal tools built over many years. Teams first have to map where relevant data lives and understand how different systems relate to each other.

Extracting that data is not straightforward. Older systems may not support modern access methods, and engineers need to ensure they don’t disrupt live operations. At the same time, the data itself is often messy: inconsistent formats, unclear field names, and missing documentation.

This stage is less about coding and more about discovery. It often requires both technical work and conversations with people who understand how the systems evolved. It can take weeks or months and often shapes what is realistically possible for the AI project.

Labeling the data

If your model needs to learn from examples (supervised learning), you’ll need labeled data — meaning someone defines what “correct” looks like. For example, marking transactions as fraud or not fraud, or tagging messages as spam or not spam.

Not all models require this. Some can learn patterns without labels (unsupervised learning), or use pre-trained knowledge (like many modern LLM-based systems). But whenever you need the model to predict a specific outcome, labeling is usually required.

This usually means domain experts manually reviewing records and assigning labels. It is slow, expensive, and difficult to scale. To make it reliable, teams need clear guidelines, training, and ongoing quality checks so that different people label data consistently.

Labeling is not a one-time step. As new scenarios appear, guidelines need to be updated, and data needs to be relabeled or expanded. The quality of this process directly determines how accurate the model will be later.

Cleaning and preparing the data

Even after extraction and labeling, the data is not ready.

Teams start by fixing obvious issues like missing values, duplicates, and inconsistent formats. But once the model is trained, new problems appear — unusual patterns, outliers, or performance drops that trace back to hidden data issues.

This creates an iterative process: prepare data, train the model, analyze results, then go back and refine the data again. Each cycle improves both the dataset and the team’s understanding of it.

Cleaning is not a one-time step. It continues throughout the project.

Building and maintaining pipelines

To make data usable at scale, teams build pipelines that move and transform it from source systems into model-ready datasets.

These pipelines are more fragile than expected. Small changes in source systems — like renamed fields or updated formats — can break them or, worse, produce incorrect data without obvious errors.

When pipelines fail, progress stops. Engineers have to trace the issue, fix the logic, and restore the flow. Without proper monitoring and validation, this becomes a recurring bottleneck.

Handling changes over time

Data systems do not stay stable. Fields change, formats evolve, and new attributes are introduced.

When this happens, previously prepared data becomes inconsistent with new data. Teams often need to update pipelines and reprocess historical data to keep everything aligned.

These changes can also affect how the model interprets inputs, which sometimes requires retraining. Over time, maintaining consistency becomes an ongoing effort rather than a one-time setup.

Acquiring external data

In some cases, the required data is not available internally.

Teams then need to purchase it, which introduces a new layer of work: selecting vendors, negotiating contracts, passing legal and compliance checks, and integrating the data into existing systems.

Even after acquisition, external data often needs significant transformation before it can be used. It also introduces ongoing costs and dependencies that affect how the AI system operates.

What Defines AI Data Quality and How to Evaluate It?

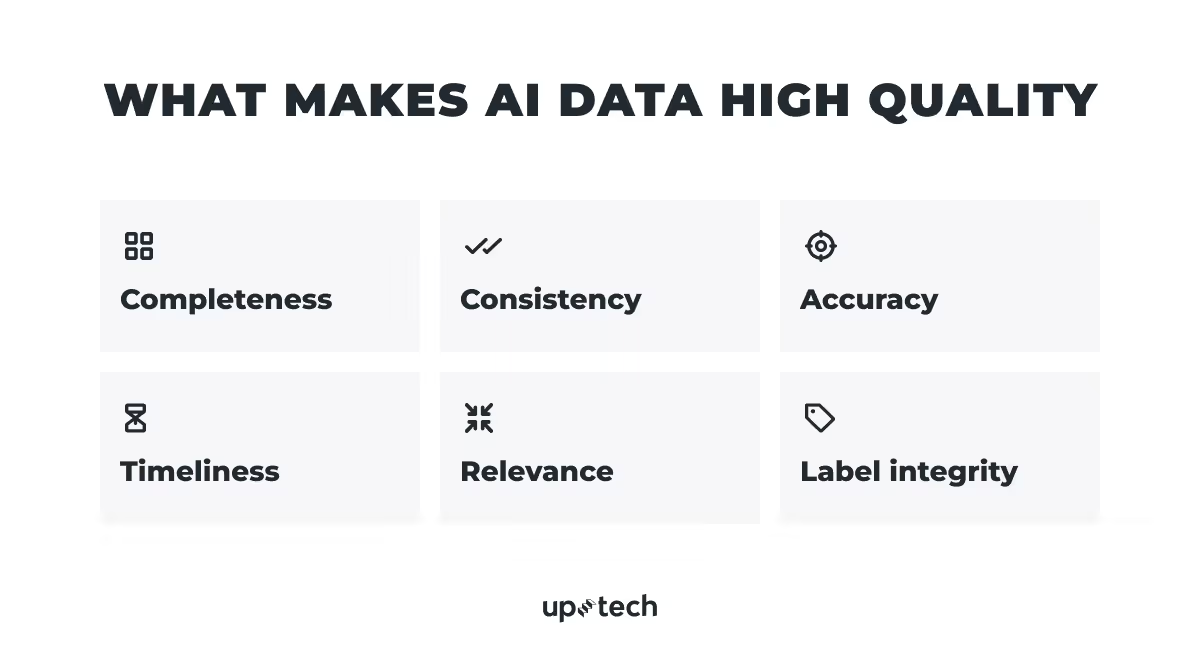

Several core dimensions define AI data quality: completeness, consistency, accuracy, timeliness, relevance, and label integrity.

Below, I'll break down what each of these means and how to assess them in practice.

Completeness

Start with the most obvious question: Do you actually have the data you need?

If key fields are missing or rarely filled in, the model learns from an incomplete picture of the business process. It has no way of knowing what it is missing.

For example:

- In credit risk modeling, missing income or employment data makes it difficult to estimate repayment capacity

- In fraud detection, missing device or location signals reduce the model's ability to detect suspicious patterns

- In healthcare AI, incomplete patient histories can distort predictions about treatment risk or readmission

Missing data almost never appears randomly. It clusters around new customers, international transactions, and edge-case workflows. These are exactly the scenarios where accurate predictions matter most. In practice, a field can exist in your CRM and still be empty for 40% of records.

How to assess it:

- Run a completeness report. Ask your BI team to measure what percentage of records contain a value for each field the model will rely on. Based on the results, you may need to improve data collection, adjust the model design, or narrow the project scope.

- Confirm with operations teams. The people responsible for entering data can explain why certain fields are often missing, whether it is a system design issue or a workflow one.

Consistency

Even when the data exists, the next issue appears quickly: does it mean the same thing everywhere?

Most organizations store data across CRM platforms, billing systems, product analytics, support tools, and financial databases. Each may record the same information differently.

For example:

- Revenue recorded in different currencies across regions

- Customer IDs that do not match between the CRM and billing systems

- "Active customer" is defined differently by product and finance teams

Humans can reconcile these differences manually. Models cannot. Conflicting definitions appear as contradictory signals, and the model learns from all of them equally.

In practice, aligning definitions of basic metrics like revenue or churn is where a significant portion of engineering time goes, before a single model is built.

How to assess it:

- Compare metric definitions across teams. Ask each department to independently write down how they define the core metrics relevant to the AI use case. If definitions differ, the model will train on inconsistent signals.

- Estimate the alignment effort. Ask your data team how long it will take to align datasets before modeling begins. If the answer is weeks, the root cause is organizational rather than technical. The business lacks standardized definitions for its core metrics.

Accuracy

At this point, even well-structured data can still be misleading if it doesn’t reflect what actually happened.

Operational datasets frequently contain duplicate customer records, outdated account statuses, incorrect timestamps, and misclassified transactions.

In reporting, these errors have a limited impact. Dashboards can show correct trends even with some inaccurate records. In machine learning, incorrect records do not just add noise. They actively teach the model wrong relationships.

For example:

- Incorrectly labeled fraud cases cause the model to miss real fraud patterns

- Inaccurate churn labels hide customers at risk

- Misclassified transactions distort purchasing behavior patterns

Label errors, where the outcomes the model learns from are themselves incorrect, are covered in the Label Integrity section below. They represent a distinct and often more damaging category of accuracy problem.

How to assess it:

- Review how outcomes are recorded. Ask whether labels are based on verified events or inferred automatically by system rules.

- Check for duplicates and contradictions. These are the most common accuracy issues and among the easiest to quantify before modeling begins.

- Validate a sample manually. Reviewing 20 to 30 records with a domain expert regularly surfaces problems that automated checks miss.

Timeliness

Even accurate data loses value over time.

Models learn from historical snapshots. As the business evolves, those patterns slowly drift away from reality. The model, however, keeps applying what it learned as if nothing has changed.

This gap often goes unnoticed in testing, because test data comes from the same period as training data. It only becomes visible after deployment.

For example:

- Churn models trained before a major product redesign

- AI antifraud systems trained on outdated attack patterns

- Recommendation engines built on historical usage that no longer reflect current preferences

This is known as concept drift. The real-world behavior the model was trained to predict shifts gradually until its assumptions no longer hold. Performance declines slowly, often going unnoticed until business impact is already visible.

How to assess it:

- Check when the training data was collected. Map it against major business events such as product launches, pricing changes, and policy updates. Any significant event after the data cutoff is a risk factor.

- Review how often key variables are updated. Some fields refresh daily. Others change only when someone intervenes manually. Models that depend on stale inputs produce stale outputs regardless of how well they were trained.

- Define a retraining schedule before deployment. How frequently the model needs retraining depends on how quickly behavior changes in your domain. This needs to be owned and funded before go-live, not treated as an afterthought.

Relevance

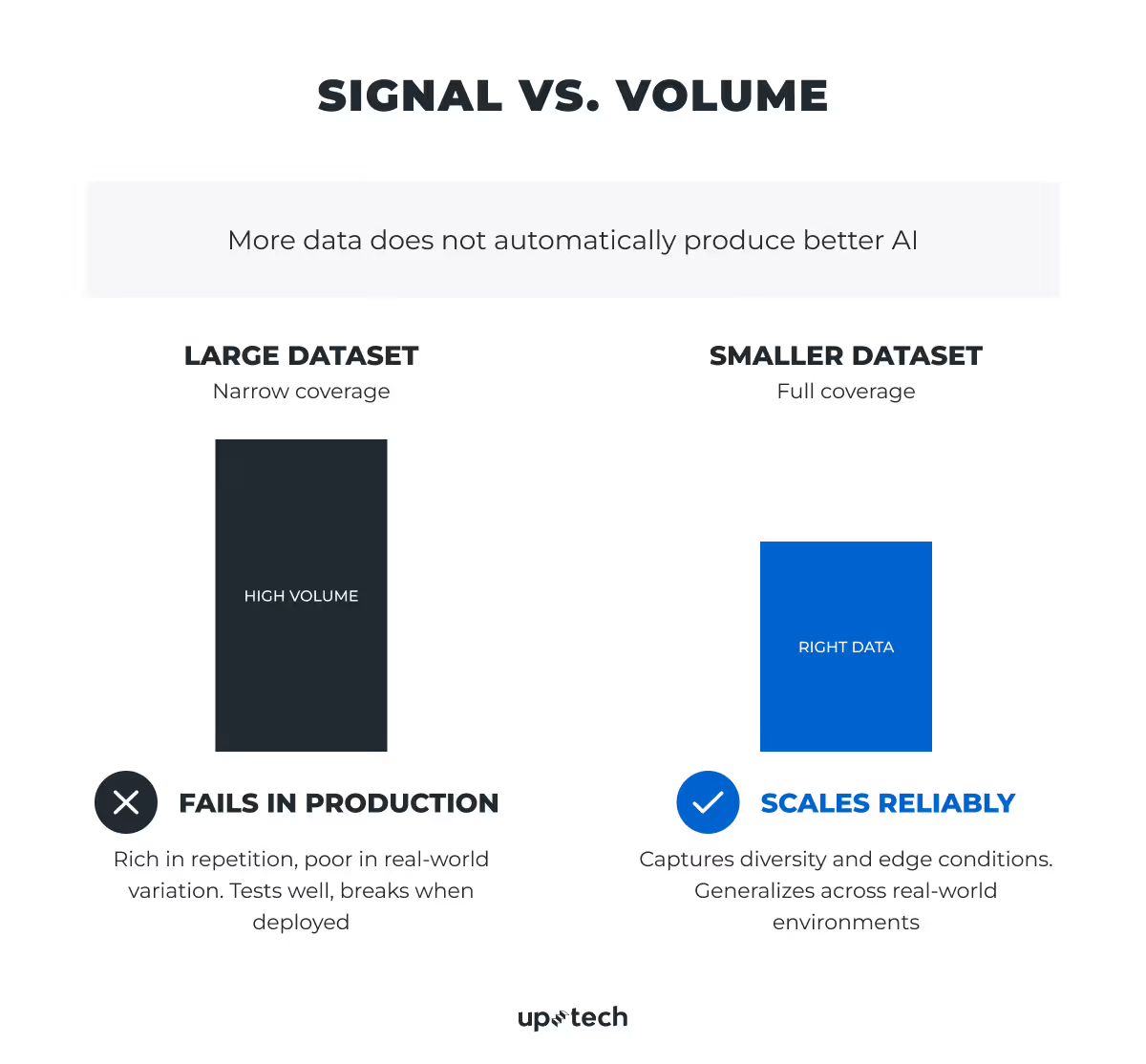

At this stage, the question shifts from “how much data do we have?” to “are we using the right data at all?”

More data does not automatically improve model performance. A smaller dataset built around the right variables will consistently outperform a larger one filled with the wrong ones.

For example:

- Churn prediction depends on product usage behavior, not demographic fields that are easy to collect

- Fraud detection depends on transaction patterns and device signals, not customer tenure or account type

- Equipment failure prediction depends on sensor readings and maintenance history, not procurement records

In most projects, teams start with hundreds of variables. After analysis, only a small fraction meaningfully contributes to predictions. The rest is data collected for operational convenience. Including it gives the model more noise to learn from and can reduce accuracy on the cases that matter most.

The most predictive variables are often the hardest to collect. Product usage signals require instrumentation. Device fingerprints require tracking infrastructure. Maintenance histories require years of consistent logging.

Organizations tend to have abundant data on what is easy to store and sparse data on what actually drives outcomes.

From an engineering standpoint, the question is not simply:

“Do we have enough data?”

It’s:

“Do we have the right data across the full range of real-world conditions?”

This distinction often determines whether AI initiatives scale or stall in post-deployment remediation.

How to assess it:

- Start from the business decision, not the dataset. Identify the signals a skilled human expert would use to make this decision manually. Then check how much of that information exists in your data.

- Run a feature importance analysis. Ask your data team to build a pilot model and rank variables by predictive contribution. This regularly reveals that assumed-important variables contribute little, while unexpected ones carry most of the signal.

- Identify instrumentation gaps. If the most predictive signals are not currently collected, the organization must either build collection infrastructure before modeling begins or adjust what the model is expected to do.

Label integrity

Finally, there is one dimension that tends to outweigh all others: the quality of labels.

For supervised machine learning, label integrity is often the most critical data quality dimension. Labels define what "correct" looks like. Everything else in the dataset is context. If the labels are wrong, the model learns to be wrong confidently.

Labels are the outcomes the model trains on: fraud vs. legitimate, churned vs. retained, approved vs. rejected, and high-priority vs. routine.

Most organizations infer these outcomes from operational processes rather than observing them directly. Operational processes were designed to run a business, not to teach a machine what reality looks like.

For example:

- Fraud is labeled only after a chargeback, meaning undisputed fraud is recorded as legitimate

- Support tickets marked resolved because the agent closed them, not because the issue was fixed

- Diagnoses that vary between clinicians reviewing the same case

Improving label quality almost always produces larger performance gains than changing the algorithm. Yet most AI investment goes into model selection, not label validation.

How to assess it:

- Trace the origin of every label. Ask your data team how each label was generated, which system produced it, what rule triggered it, and whether a human verified it. Automatically assigned labels with no verification step are high risk by default.

- Check for label drift. Split the labeled dataset by time period and compare distributions. Unexplained shifts in label ratios often indicate inconsistent labeling rather than a change in behavior.

- Run a manual audit with a domain expert. Review 30 to 50 random records with someone who understands the business outcome deeply. This is the fastest way to surface systematic labeling errors that automated checks will not catch.

What Are the Best Practices for AI Data Quality?

AI data quality doesn’t come from one fix. It depends on how you plan, build, and manage data across the whole system. Below are the key practices that make AI work reliably in production.

Build data infrastructure before AI models

Most AI budgets overinvest in the model and underinvest in the data systems that make the model work.

The result is prototypes that perform well in demos and fail in production. Data infrastructure is not a background cost. It is the foundation on which the entire initiative depends.

Before approving any AI project budget, ask what percentage is allocated to data preparation, pipelines, and AI governance. If the answer is under 30%, the budget does not reflect how AI projects actually work.

Assign ownership to every dataset

High-quality data does not maintain itself. Every dataset feeding a production AI system needs a named owner who is responsible for its quality, accuracy, and fitness for use. Without clear ownership, quality problems accumulate, and no one has the authority or incentive to resolve them.

Before the project starts, confirm that every critical data source has a designated owner. If it does not, assign one.

Create a cross-functional data council

Data quality decisions require input from product, operations, compliance, and domain leadership, not just engineering. Definition conflicts, labeling standards, and data usage policies need a cross-functional forum to resolve them. Without it, fragmentation grows silently as AI adoption expands across the organization.

Establish a standing group that includes representatives from each team that owns or consumes critical data. Its job is to align definitions, resolve conflicts, and review data quality before major AI initiatives launch.

Automate data validation

Data entering the model should be checked continuously for errors, missing values, and unexpected changes. Ask your team whether this validation is automated or manual. Manual validation does not scale.

Track and version all training data

The team should be able to tell you exactly what data any deployed model was trained on. If they cannot, the project lacks the traceability needed for auditing, debugging, and regulatory review.

Monitor data and model drift

Customer behavior, market conditions, and operational processes change over time. Ask your team what system is in place to detect when the model's training data no longer reflects current reality, and who is responsible for acting on it.

Ensure label quality is controlled

Labeling errors go directly into the model behavior. Require evidence that labeling consistency is being measured and audited, not assumed.

Document every production dataset clearly

Every dataset used in production should have written documentation covering its source, collection method, labeling logic, and known limitations. Without this, datasets cannot be audited, reused, or defended in a regulatory review.

Include domain experts at every stage

Engineers understand models. Domain experts understand the business reality that the model is supposed to reflect. The fraud analyst, the credit officer, the clinical specialist — these are the people who can tell you whether a label reflects reality or an operational approximation. Their involvement should not end at project kickoff.

Treat labeling as an ongoing process

As the model operates in production, it will encounter scenarios it was not trained on. Labeling pipelines need to evolve alongside usage. A model that was well-labeled at launch and never updated will degrade as the world it operates in changes.

High AI data quality does not come from isolated fixes. It requires coordinated investment across strategy, operations, governance, and engineering. Organizations that build these practices systematically create the conditions where AI scales reliably, predictions stay trustworthy, and investment produces sustained business value rather than a series of expensive pilots that never reach production.

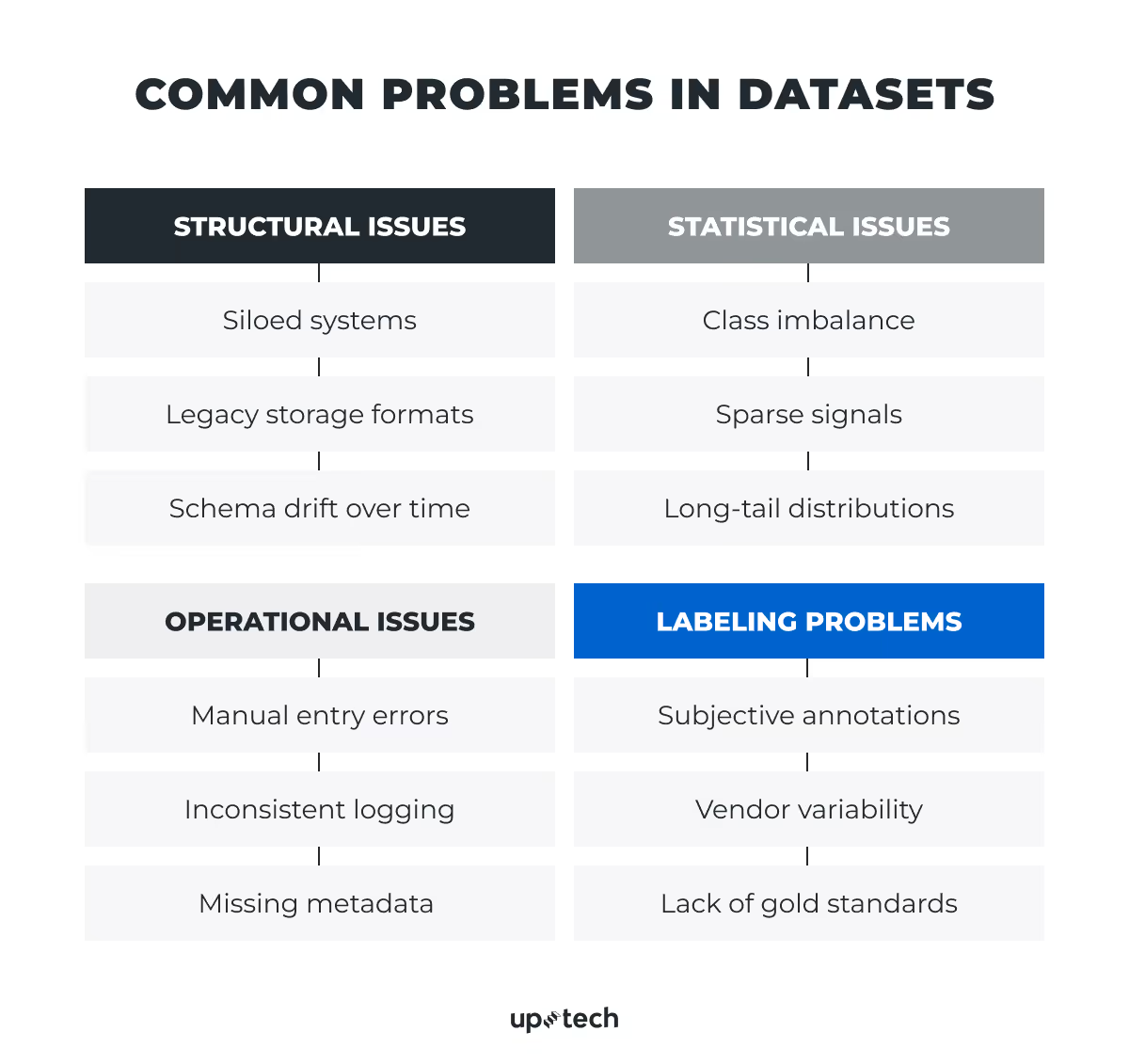

What Are the Most Common Problems in AI Datasets?

If we look at common problems in real-world datasets, most of them have the same root cause: production data is rarely designed for machine learning.

Most datasets are byproducts of operational systems. They were created for transactions, compliance, reporting, or monitoring, not for training models. Because of that, they often contain structural gaps, statistical distortions, and workflow artifacts that can reduce model reliability.

Let’s break down the most common issues, so you know what to look for when assessing data quality.

Structural issues

Enterprise data is split across systems that were never designed to work together. CRM, ERP, billing, and product analytics each capture a partial view of the same customer or transaction. Flat files, proprietary exports, and legacy databases require significant engineering work before they can be used for modeling. Fields get renamed, formats shift, and definitions evolve independently across teams over the years, with no record of when or why.

Possible consequences: A model trained on fragmented or historically inconsistent data learns patterns that do not reflect the full business reality. It produces predictions that are confident but incomplete. In production, this surfaces as systematic underperformance on the customer segments or transaction types that happen to be underrepresented in the system the model was trained on. Integration gaps discovered mid-project routinely add weeks to delivery timelines and require additional budget that was not scoped.

What to do:

- Assign clear ownership of each data source before the project is approved. If there is no designated owner, accountability for data quality falls between teams, and issues go unresolved.

- Treat data integration as a budget line. Ask your project lead what percentage of the timeline is allocated to data preparation. If the answer is under 40%, the estimate is likely optimistic.

- Make the cross-system definition decisions that only leadership can make. When finance and product define "active customer" differently, that conflict cannot be resolved at the engineering level. If it is not decided explicitly before modeling begins, engineers will decide it for you using technical criteria rather than business logic.

Statistical issues

Production datasets reflect the frequency of real-world events, which means rare but critical outcomes are underrepresented by design. Fraud, equipment failures, and adverse medical events make up a small fraction of total records. Key predictive signals may appear only under narrow conditions. Edge cases sit in the tail of the distribution and are statistically insignificant in volume but disproportionately significant in business impact.

The result is a dataset that is numerically dominated by normal, unremarkable events. And that is exactly what the model learns to predict.

Possible consequences: A model trained on imbalanced data will optimize for the majority outcome because that is where the statistical reward is. It learns that predicting "legitimate transaction" or "no failure" is almost always correct, because in the training data, it almost always was. In production, this surfaces as a model that performs well on overall accuracy metrics while systematically failing on the outcomes that justified the investment. Fraud slips through. Equipment fails without warning. High-risk cases are misclassified as routine. By the time this pattern is visible in business results, the model has often been in production long enough that retraining requires significant effort.

What to do:

- Require performance reporting on the outcome that matters, not overall accuracy. When commissioning an AI project, specify that results must be reported separately for the specific outcome the model was built to catch, whether that is fraud, churn, equipment failure, or another rare event. A model that is 99% accurate on a dataset where 1% of cases are fraudulent may be catching no fraud at all. Overall accuracy hides this completely.

- Before sign-off, ask one question: how does this model perform on our highest-risk cases? If the team cannot answer this with specific numbers, the model has not been properly evaluated. Do not approve deployment until that answer exists.

- Set performance thresholds for edge cases as a project requirement from day one. Leaders define what "good enough" means for the business. If your highest-risk customer segment or your most costly failure mode is not explicitly included in the success criteria at project kickoff, the engineering team has no obligation to optimize for it. By the time you discover the gap, the model is already built.

Operational issues

Operational data carries the fingerprints of every workflow decision, system change, and human behavior that produced it. Manual entry introduces typos and inconsistent interpretations at scale. Logging practices evolve as systems are updated, meaning some events are tracked carefully, and others are not tracked at all. Metadata, the timestamps, context fields, and identifiers that give records meaning, are frequently incomplete or missing entirely.

None of this is anyone's fault. These systems were built to process transactions, not to train models. But the consequence is that a significant portion of preparation time goes into reconstructing the context that was never captured in the first place.

Possible consequences: A model trained on operationally noisy data learns from corrupted signals. Manual entry errors teach the wrong relationships. Inconsistent logging means the model sees some time periods or event types clearly and others barely at all, producing predictions that are reliable in some contexts and unreliable in others without any obvious boundary between the two. Missing metadata limits how deeply the model can reason and makes it difficult to explain or audit its decisions later, which becomes a compliance and governance problem as AI use expands.

What to do:

- Before approving the project, ask whether data entry is validated at the point of collection. If your operational systems allow free-text input, inconsistent formats, or optional fields for data the model will depend on, those are structural problems that need to be addressed at the source. Cleaning data downstream is expensive and never fully reliable.

- Ask your operations lead which events or workflows are tracked inconsistently across regions, teams, or time periods. They will know. This conversation takes an hour and regularly surfaces gaps that would otherwise cost weeks of engineering time to discover.

- Treat metadata completeness as a governance requirement, not a technical nicety. Timestamps, context fields, and source identifiers are what allow the organization to audit model decisions, investigate errors, and demonstrate compliance. If the data the model trains on cannot be traced back to its origin, the organization cannot fully account for the decisions the model makes.

Labeling problems

Labels are the outcomes the model learns from. They define what correct looks like. When different people label the same data differently, or when no formal standard exists for what a label should mean, the model trains on contradictions. It learns unstable patterns that perform inconsistently in production.

The most dangerous version of this problem is the absence of a gold standard: a validated reference set of correctly labeled examples that the team can use to measure consistency and catch errors. Without it, there is no way to know how much noise is in the labels, and therefore no way to know how much of the model's underperformance comes from the labels themselves rather than the algorithm.

More labeled data does not fix this. It scales the noise.

Possible consequences: A model trained on inconsistently labeled data learns different things from different parts of the dataset. In production, this surfaces as unpredictable behavior: the model performs well on cases that resemble the consistently labeled portion of the training data and poorly on everything else. Labeling problems are also among the hardest to diagnose after deployment because the model's outputs look plausible. It is not obviously broken. It is confidently wrong in ways that require domain expertise to detect. By the time the pattern is identified, significant decisions may have already been made on unreliable outputs.

What to do:

- Ask who defined the labeling rules and when they were last reviewed. Labeling guidelines that were written at project kickoff and never revisited will drift as the business evolves. If no written guidelines exist, that is the first problem to solve before any annotation work continues.

- Require a labeling consistency audit before the model is trained. Ask your team to have two independent reviewers label the same sample of records and compare results. If agreement is low, the guidelines are ambiguous, and the labels cannot be trusted at scale. This audit takes days and can prevent months of rework.

- Do not approve the scaling of labeling work until quality is verified on a small sample. The instinct when behind schedule is to label more data faster. Resist it. Scaling inconsistent labels scales the problem.

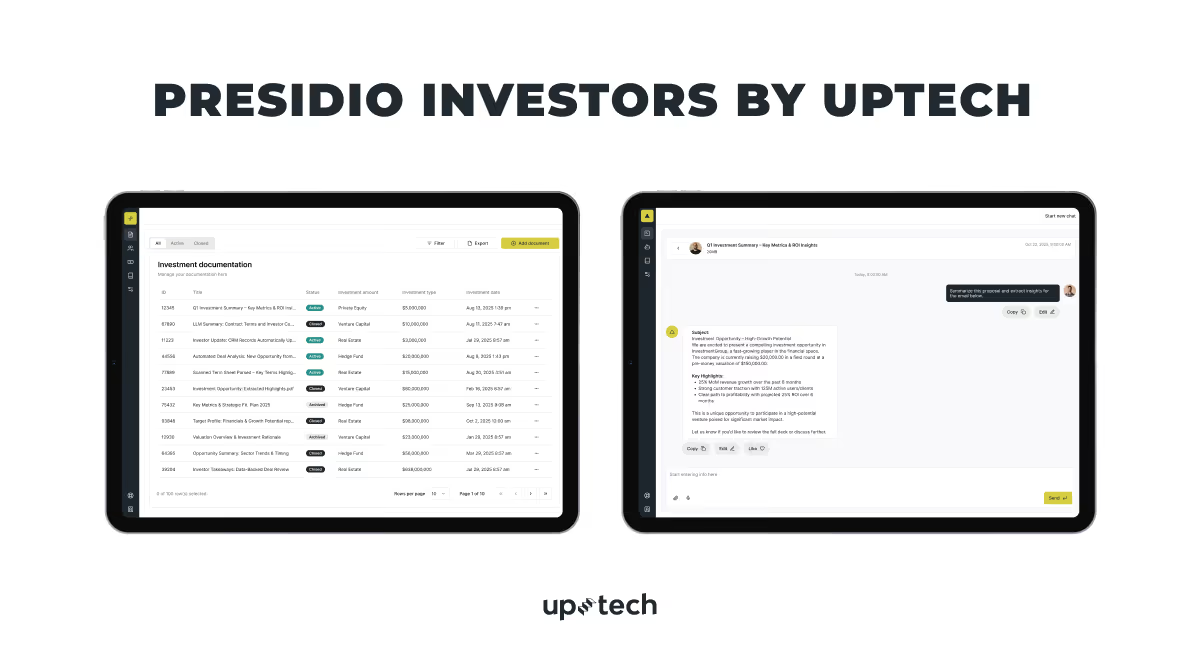

Presidio Investors: Data Challenges We Owned

Uptech partnered with Presidio Investors to address a growing challenge: large volumes of unstructured investment data slowed down analysis and made it difficult to maintain consistent, high-quality decision-making. Deal information came in different formats, requiring significant manual effort to clean, structure, and interpret before it could be used.

To solve this, Uptech built an AI-powered solution that automatically extracts, structures, and analyzes investment data. The platform standardizes inputs across sources, turning fragmented information into a consistent, usable dataset and enabling faster, more reliable evaluation of opportunities.

As a result, manual data processing was reduced by 80%, and the team can now process up to 100 deals per day. This shift not only increased throughput but also improved data consistency and visibility across the pipeline.

Conclusion

AI doesn’t create clarity on its own. It amplifies whatever foundation you give it.

For teams building real products, this is where the difference shows. Some organizations keep chasing better outputs. Others step back and build systems that make good outcomes repeatable.

That’s the shift: from experimenting with AI to operating it.

If you have an AI project idea and need help with data preparation and management, get in touch — we’ll help you turn it into something that actually works.

Frequently Asked Questions

What is AI data quality?

AI data quality refers to how well your data supports accurate, reliable, and consistent model performance. It’s how the data reflects real-world conditions, is complete, properly labeled, and aligned with the business problem the model is solving. Poor data quality leads directly to unreliable outputs and failed AI initiatives.

What are the 6 dimensions of data quality in AI?

The six core dimensions of AI data quality are:

- Completeness — Are key fields filled and available?

- Consistency — Do data definitions match across systems?

- Accuracy — Does the data reflect real-world events correctly?

- Timeliness — Is the data up to date and relevant?

- Relevance — Does the data actually help predict the outcome?

- Label integrity — Are the labels (outcomes) correct and trustworthy?

Together, these dimensions define whether your data is usable for production-grade AI.

What is label integrity, and why does it matter?

Label integrity refers to the correctness and consistency of the outcomes your model learns from (e.g., fraud vs. legitimate, churn vs. retained).

It matters because labels define what “correct” means. If labels are wrong, the model learns incorrect patterns and produces confident but flawed predictions. In many cases, improving label quality has a bigger impact than changing the model itself .

What is concept drift?

Concept drift is the gradual change in real-world behavior that makes historical data less relevant over time.

For example, customer behavior, fraud patterns, or market conditions evolve — but the model continues to rely on outdated patterns. As a result, performance declines after deployment unless the model is retrained regularly.

How much of an AI project is data preparation?

In practice, up to 80% of an AI project can be spent on data preparation.

This includes data collection, cleaning, labeling, integration, validation, and pipeline setup. While model training may take days or weeks, preparing usable, high-quality data often takes months.

What is the most common cause of AI project failure?

The most common cause of AI project failure is poor data quality.

More than 80% of AI projects fail not because of weak models, but because data is incomplete, inconsistent, poorly labeled, or not aligned with real-world conditions. Without high-quality data, even the best models cannot perform reliably.