Many startups and small to mid-sized businesses want to add AI to their product but struggle to understand how a real AI project development flow looks in practice. The idea sounds promising, yet the same questions appear early on: Do we really need AI here? How long will this take? And how much budget should we prepare for?

From my experience as an ML engineer at Uptech, these questions come up in almost every conversation with founders and product teams. The problem is rarely AI itself. More often, teams lack a clear view of the process, underestimate early decisions, and discover too late how strongly those decisions affect timelines and costs.

In this article, I walk through the development flow for a real healthcare AI project designed to detect harmful drug interactions and support medication tracking, including the discovery phases and AI PoCs built during this time. This is not a theoretical framework. It reflects how AI projects actually start, where uncertainty appears, and how teams decide whether to move forward or stop.

If you plan to integrate AI into your product and want realistic expectations around time, cost, and effort, this breakdown will help you see the full picture before you commit resources.

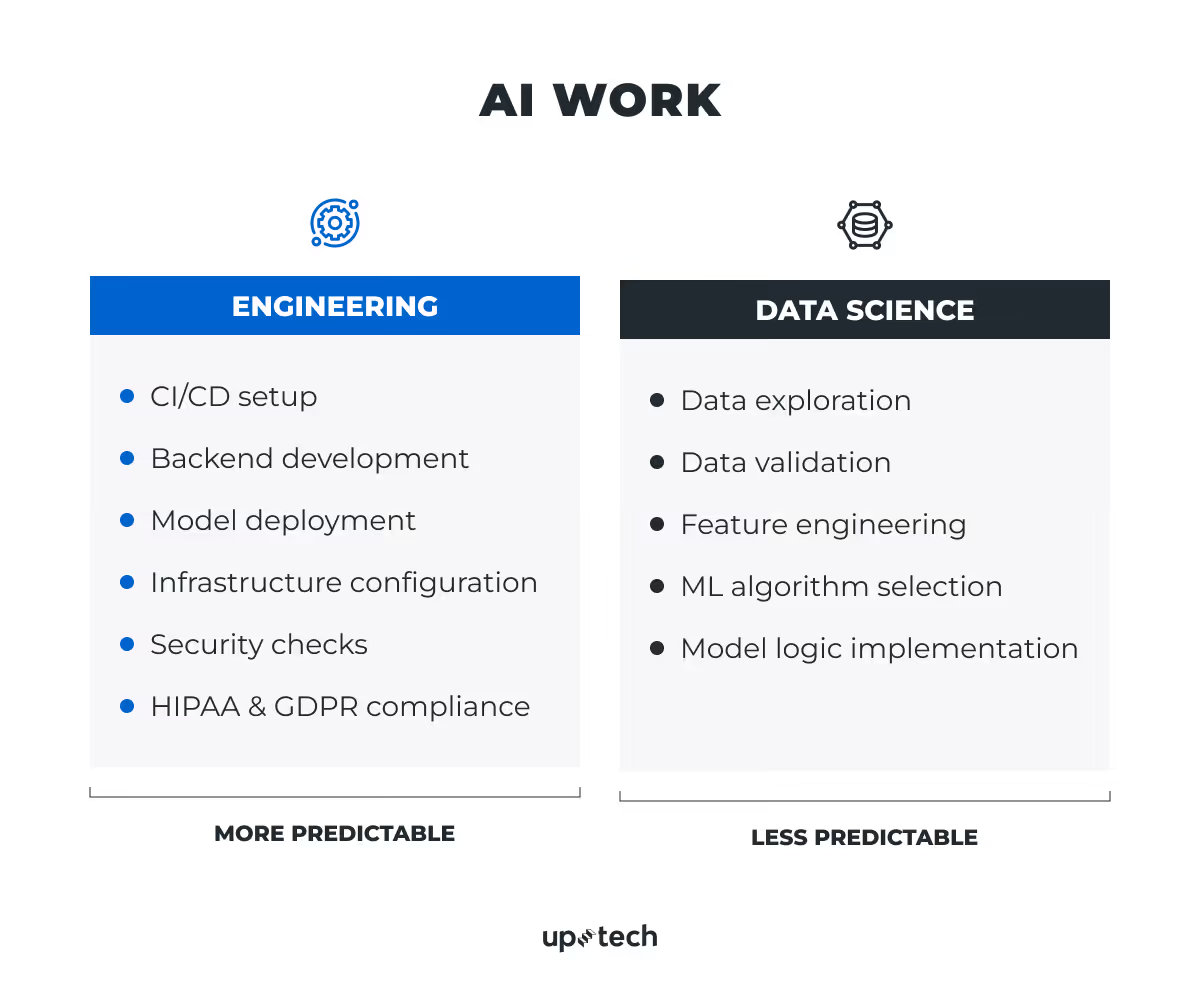

How AI Work Is Divided in Real Projects

When it comes to pretty much any AI project, the work usually splits into two large areas: engineering and data science. I need to mention this distinction early because it explains why some parts of an AI project feel straightforward, while others remain uncertain even with a clear plan. Let’s cut to the chase.

The engineering part of AI projects

Engineering work covers everything required to run AI inside a real product.

This includes things like:

- CI/CD setup. Automation of build, test, and deployment steps so updates reach environments in a controlled and repeatable way.

- Backend development. Implementation of APIs and services that connect the AI model with the product, users, and data sources.

- Model deployment. Packaging and release of the trained model so it can serve predictions in real-world conditions.

- Infrastructure configuration. Setting up cloud resources, compute, storage, and scaling rules required to run the system reliably.

- Security checks. Protection of data, secured connections, and compliance with the best security practices to reduce risk and limit exposure.

- Compliance with regulations such as HIPAA or GDPR. Alignment of data handling, storage, and access with regulatory requirements relevant to healthcare and user privacy.

By the way, we also have a technical guide to HIPAA and GDPR compliance that helps teams understand what must be addressed from the start and what can safely wait until later.

This part often follows familiar software practices. The scope is easier to define, tasks are concrete, and timelines stay relatively stable. For that reason, engineering work tends to be more predictable in an AI project in terms of effort, time, and cost.

The data science work of AI projects

Data science work follows a different logic. Even when the goal looks clear at the start, uncertainty remains. Issues often appear only after the work begins. Teams may uncover things like data leaks, bias in the dataset, strong class imbalance, or data that contains too much noise. In some cases, the data provided by the client turns out to be incomplete or unsuitable for the intended use case. These problems rarely surface during early discussions, but they can change both timelines and budgets later on.

In practice, data science work includes such stages as

- Data exploration. A first review of the data to understand structure, volume, and obvious issues.

- Data validation. Checks for missing values, imbalance, leakage, noise, and data quality risks.

- Feature engineering. Selection and transformation of inputs that affect model results.

- Selection of the appropriate ML algorithm type. Choice of a model class based on data type, quality, and project constraints.

- Implementation of model logic. Model code, experiments, and result checks against real-world expectations.

For most of the SMB projects, the AI part of the projects usually involves 1-2 ML engineers, depending on task complexity. These are full-stack ML engineers who cover data science tasks and MLOps responsibilities during the early stages. Even with a small team, this stage often defines whether the AI idea is viable at all.

Beyond the AI-specific scope, a typical project involves 6-8 specialists overall. This usually includes backend and frontend engineers, QA, and product roles.

Now that we know the differences between predictable engineering work and uncertain data science work, let’s move on to the development process of AI projects based on our experience.

Internal Healthcare AI Project Example

At Uptech, we built an internal healthcare proof of concept (PoC) around medication tracking and drug-drug interaction detection. The idea was simple: users could log what meds they take, monitor dosage over time, and get a warning when certain combinations looked risky.

It sounds like a rules problem on the surface. In practice, it turned out to be more complicated than that. Drug interactions are not binary by nature. Risk shifts based on what gets combined, how much, and the broader clinical context. The rule-based approach started to buckle as the logic grew, so we moved toward an AI classification model that estimates interaction probability instead of returning a hard yes or no.

We also added a conversational AI layer through an OpenAI LLM. Users could ask plain questions about their medications and receive answers based on what they had already logged. The AI chatbot was never meant to replace a doctor, but it made the relevant information far easier to access and understand.

Because this stayed internal as a PoC, some implementation details have been deliberately kept vague. Even so, the decisions, constraints, and trade-offs covered in this article come directly from how the project unfolded in practice.

The next section walks through the AI lifecycle step by step, with the reasoning behind each choice explained along the way.

6 Key Stages of an AI Project Lifecycle

Based on this experience, I can safely say that AI projects don’t start with models or code. They have a clear sequence of stages that help teams reduce risk and avoid costly mistakes.

Following the development flow of the internal healthcare AI project I use as an example, pretty much any AI project lifecycle can be broken down into the next 6 stages:

- Problem definition and success criteria

- Decision if AI is needed

- Data work and metric definition

- Baseline model development, selection, and validation

- AI model deployment to production

- Post-release iterations and model improvement

I will walk you through each in more detail.

Stage 1. Problem definition and success criteria

Like every AI project we worked on, this one started with problem definition. At this stage, our goal was to clearly understand what problem the product needed to solve and whether AI could create real value in this specific case.

Before any technical work begins, the team defines what success looks like. This step shapes every decision that follows. The key questions are:

- What should change after AI appears in the product?

- How will we know that the change happened?

To answer this, the team defines clear business metrics. These may include user retention, which shows how often users return to the product, or satisfaction rate, which reflects user response to the change. In some cases, app ratings, reviews, and feedback trends also play a role. With these metrics in place, we can create a shared reference point and avoid subjective judgments later.

In our case, the problem was not limited to tracking a single medication. The product needed to support multiple medications taken simultaneously and identify potentially harmful drug–drug interactions in a reliable way. The key change we aimed for was the ability to detect risky combinations dynamically and surface warnings early, without relying solely on manually defined rules.

Success for this project was defined through a combination of product and quality-related criteria. On the product side, we evaluated whether the system could analyze medication combinations consistently and flag potential negative interactions without manual review. On the quality side, we focused on prediction accuracy and stability of classification results across different input scenarios.

Feedback from early reviews also played a role. It helped validate whether the generated visuals remained clear and usable for those who take multiple medications and rely on the app for safety-related insights.

These success criteria guided all later decisions. They helped the team stay focused on the actual problem and reduced the risk of building an AI solution that worked technically but failed to deliver real value.

Stage 2. Decision if AI is needed

Frankly, not every problem needs AI.

In practice, teams often discover that a simple software solution works better than a complex ML pipeline. For example, when a product needs to know the location of its users, a simple user-selected field such as “country” may solve the problem faster and with less risk than trying to infer location from text using ML (Machine Learning) and NLP (Natural Language Processing).

This stage focuses on a simple question: “Can traditional software engineering solve the problem, or does it require AI?” AI usually makes sense only when the task involves complexity that rules, logic, or standard algorithms cannot handle well. Only in these cases does AI bring real value.

At Uptech, this decision takes place during pre-sale or the Discovery phase. The team defines the scope of the problem, clarifies expected outputs, and evaluates whether AI is the right tool. In some cases, the conclusion is clear: a simpler approach is faster, cheaper, and more reliable.

For the medication tracking and drug-drug interaction PoC, AI became necessary because of the number of possible medication combinations and the need to assess their potential harm probabilistically rather than through fixed rules. Rule-based logic could not scale reliably to that level of variability, which made an AI classification component a more viable option.

This stage also included early risk identification. We considered risks such as limited data availability, data changes over time, and unexpected input combinations that the system might not handle correctly. These risks were documented and discussed internally before moving forward with the PoC.

During pre-sale or Discovery, the team also decides on the general technical direction. This may involve classical ML development, deep learning, or a hybrid approach that combines ML, rule-based logic, or LLMs (Large Language Models). Given the nature of the task, which focused on drug-drug interaction detection, the focus shifted toward a classification-based ML approach, complemented by an LLM layer for conversational interaction, with flexibility to combine it with rule-based constraints where needed.

Stage 3. Data work and metric definition

This is the most time-intensive stage of an AI project. In practice, data work can take up to 80% of the entire project effort. And here’s why.

Data preparation

How do things work here? Everything starts with data collection and preparation. The team usually requests available data from the client and checks whether additional data sources exist. When possible, public datasets are reviewed, along with licensing terms that allow legal use. This step often defines how far the project can go.

At the same time, the team reviews existing research. Papers, experiments, and applied approaches help determine whether this problem has already been solved and, if so, under what conditions. In some domains, such as remote sensing based on satellite data, many experiments already exist. In these cases, it makes little sense to repeat work that others have validated. Existing approaches can often guide product decisions or reduce experimentation time.

In practice, data preparation includes several concrete tasks:

While these steps may look simple, they define model behavior more than algorithm choice. Here, the rule is “garbage in, garbage out.” Poor preparation leads to unstable results, even when the rest of the pipeline looks correct.

Metric definition

The metric definition happens in parallel. This includes both ML metrics and business metrics.

Why do we need these metrics? Well, to make sure that the model works as planned and provides the expected reliable output. When AI makes predictions, there can be mistakes that usually fall into two categories.

A false positive happens when the model says something is present, but it is not. For example, the system flags a harmful drug-drug interaction that is not clinically significant. This leads to unnecessary follow-ups and wasted time.

A false negative happens when the model misses something that is actually there. For example, the system does not detect a harmful interaction between two medications that should trigger a warning. This can be more dangerous, especially in healthcare or risk-sensitive products.

Most AI projects involve a trade-off between these two types of errors. Reducing false positives often increases false negatives, and vice versa. That is why it is important to set up the right metrics. They help teams decide which type of mistake is more acceptable for a specific product and business case.

In this PoC, false negatives were considered more critical than false positives. Missing a dangerous interaction carries a higher risk than generating an extra warning, so recall became particularly important during evaluation.

For healthcare tasks, ML metrics may include:

- Accuracy. Share of correct predictions across all cases.

Example: how often the model correctly classifies a medication combination as safe or potentially harmful. - Precision. Share of correct positive predictions among all predicted positives.

Example: how often a flagged drug interaction is actually harmful, which matters when too many warnings reduce trust. - Recall. Share of detected positives among all real positive cases.

Example: how many truly harmful medication combinations the system successfully identifies. - F1 score. Balance between precision and recall.

Example: a single metric used when both false positives and false negatives matter.

In addition to classification metrics, we also monitored latency and cost per prediction, since the PoC included an LLM-based chatbot component. Response time and expense per answer were practical constraints alongside model quality.

At the same time, business metrics reflect real impact, such as whether users receive timely warnings, whether interaction alerts are understandable, and whether the system increases confidence when managing multiple medications.

These metrics must align. Strong model scores without business impact do not create value. This stage sets the foundation that connects data quality, model performance, and real-world outcomes.

Stage 4. Baseline model development, selection, and validation

Once data preparation is complete, the project moves into model development. At this stage, the team builds a baseline model. This is the simplest version that can solve the task and provide measurable results. In some projects, this baseline later becomes part of the production system.

The exact approach depends on the use case. Some problems do not require custom model training at all. For example, a healthcare product may need an AI chatbot that works with internal documentation or clinical guidelines. In this case, the solution may rely on an LLM with retrieval augmented generation (RAG). The team creates embeddings for documents, stores them in a vector database, and builds a chatbot that retrieves relevant information and produces structured responses.

LLM-based pipelines require a dedicated LLMOps layer. This is covered within our LLMOps services, which address monitoring, iteration, and cost efficiency for large language models.

In our project, the situation was different. We needed a custom model because the system had to classify potential drug–drug interactions and estimate whether a specific combination could be harmful. The number of possible combinations made rule-based logic hard to maintain and even harder to scale. Predefined interaction lists alone were not sufficient, especially when context and dosage variations were introduced, so the visuals had to be generated dynamically based on the selected inputs.

We started with a simple baseline to check whether this approach could produce stable and structurally correct results. At this stage, interpretability was important. We needed to understand how changes in input affected interaction risk prediction, not just whether the output looked acceptable. Based on what we saw, we analyzed data patterns and adjusted the approach as the level of complexity increased.

From that baseline, we looked at how the model behaved across different inputs and combinations. This helped us spot patterns early and understand where the approach held up and where it started to break. We increased model complexity only when the results clearly justified it, rather than adding it upfront.

We also compared the baseline against alternative approaches to see whether they improved the key metrics in a meaningful way. In this process, deeper issues sometimes surfaced. The data could be too noisy, or the overall dataset too limited to support more complex behavior. When that happened, the focus shifted back to data. Since this was an internal PoC, these findings were reviewed internally, and additional datasets were evaluated before continuing further.

Stage 5. AI model deployment to production

By this point, we already had a working version of the system. In this project, it took the form of a functional demo rather than just a PoC. Users could log different medication combinations and receive an assessment of potential drug-drug interaction risk, along with contextual feedback through the chatbot. This was enough to confirm that the idea worked in practice, not just in theory.

Moving this demo into production required a shift in focus. The question was no longer whether the model could classify risky medication combinations, but whether the whole system could run reliably over time. Stability became more important than experimentation.

At this stage, we relied on MLOps practices to bring the solution into a production environment. Our main concern was predictable behavior. The system needed to handle different combinations without breaking, scale with usage, and remain maintainable as new cases appeared. Since the core task involved risk prediction and conversational responses, consistency mattered more than extreme performance tuning.

Production metrics also changed. What mattered was that risk assessments remained stable and consistent across similar inputs, and that chatbot responses stayed coherent and context-aware. We monitored system behavior closely to catch failures early and understand how the model reacted to new or unexpected combinations.

Infrastructure cost became part of the conversation as well. Classification requests and LLM calls can scale quickly with user activity, so we estimated usage patterns and billing behavior before full rollout. This gave the client a realistic picture of how costs might grow with adoption and helped avoid surprises later.

Security and data access received special attention. We followed a minimal-access approach and set up monitoring to spot failures, unusual inputs, or shifts in data patterns that could affect results. This reduced risk and limited the impact of potential failures.

For this project, we used AWS Cloud Services to support production deployment. We chose this setup because of its reliability, flexibility, and the team’s hands-on experience with these tools. It allowed us to integrate the model into a stable environment and scale the system as needed without adding unnecessary complexity.

Monitoring and observability played a critical role at this stage. We tracked system health, logs, and failures to understand how the model behaved once real users started interacting with it. It was important to spot cases where interaction detection failed, produced unstable predictions, or where chatbot responses did not align with the logged medications. Early visibility into these issues allowed us to fix problems before they affected users.

We also paid close attention to incoming data. Some inputs did not match the assumptions made during earlier stages. For example, users could log rare medication combinations or unusual dosage patterns that were underrepresented in the initial dataset. These cases affected output quality and needed careful monitoring. We tracked input distribution and compared it against expected patterns to detect shifts and catch data drift early.

This stage helped keep the system grounded in real usage. Without monitoring, even a well-performing model can degrade over time. Continuous observation allowed us to react quickly, adjust the system, and maintain consistent output quality as the product evolved.

Stage 6. Post-release iterations and model improvement

The project did not stop after the initial release. In fact, this stage became one of the most important parts of the work.

For this product, it made sense to launch with realistic expectations rather than wait for a perfect result. Early versions already supported the core use case and made it possible to gather feedback from real usage.

Post-release work focused on gradual improvement. As users interacted with the system, new data became available. This data helped reveal patterns and edge cases that were not visible earlier, such as rare medication combinations or borderline interaction cases. Based on these inputs, we updated the models and adjusted the approach where needed. In some cases, targeted fine-tuning was enough to improve results without major changes to the overall setup.

Each update followed the same loop. Particular attention was paid to recall and precision balance, since both false alarms and missed interactions directly affected user trust. This helped keep progress measurable and avoided regressions.

This cycle usually repeats over time. Release, observe, adjust, and deploy again. For this project, that approach allowed the system to evolve alongside real usage and changing requirements. Rather than treating AI as a one-time feature, the work must be turned into a system that continues to improve as the product matures.

AI Project Timelines and Cost Ranges for This Case

During this project, one of the questions that came up early was how long the work would take and what level of budget it would require. As is often the case with AI, there was no single fixed answer. Both timelines and costs depended on data readiness, scope decisions, and how much iteration the solution would need along the way.

Since this was an internal PoC, we cannot share exact commercial figures. What we can describe are the initial timeline and cost ranges that were estimated at the beginning of the project. These estimates served as a planning baseline and helped structure the work before implementation began.

Those early numbers shaped expectations within the team. They influenced technical choices, iteration speed, and the order in which problems were addressed. While details evolved as the project progressed, having clear estimates upfront made it easier to manage trade-offs and keep the work grounded in reality.

In the sections below, we break down how we approached timelines and cost estimation for this project, what factors had the biggest impact, and which assumptions mattered most at each stage.

Timelines by stage of the project

AI projects rarely move at a constant pace. Some stages pass quickly. Others take much longer than expected.

- AI PoC. A PoC usually takes 2-6 weeks. Most of this time goes into data work rather than model code. The goal is not a perfect solution but proof that the idea works with real data. The medication interaction project described in this article falls into this category. It was structured and delivered as an internal PoC.

- MVP (Minimum Viable Product). For a small and focused use case, an AI MVP may take 4-6 weeks. For more complex tasks, timelines often reach 6-12+ weeks. Complexity grows with data volume, number of edge cases, and integration depth.

By the way, you can read our step-by-step guide on how to build an MVP and get acquainted with the MVP development checklist to figure out all the nuances.

- Long-term development is a normal part of AI projects. Work does not stop after MVP. In practice, AI systems continue to change over months or even years as new data appears, requirements evolve, and models need updates. This should be expected early, especially in complex domains such as healthcare.

In general, post-MVP AI work rarely requires a full-time team. Most projects move into a maintenance and improvement mode, where a small but regular time investment keeps the system stable and relevant. This often translates into 8-16 hours per week from an ML engineer for monitoring, data review, and incremental updates, with short periods of higher effort when larger changes are required.

In this project, we followed the same pattern. After the initial release, the focus shifted to gradual improvement based on real usage. New data and edge cases led to targeted updates, and at certain points, the effort increased temporarily to address quality or scalability needs.

Read our article if you need some extra information about the differences between MVP, PoC, and Prototype.

A common mistake is to plan AI work as a short, fixed project. In reality, AI behaves more like a long-term capability than a one-time feature.

How timelines translate into cost estimates

Once timelines are clear, it becomes possible to talk about the budget in a more concrete way. AI projects still involve uncertainty, but estimates do not need to stay abstract.

A simple way to think about AI cost is still useful as a starting point:

Estimated cost ≈ total hours × hourly rate

In practice, timelines already depend on how many people are involved. Adding more engineers usually increases cost rather than cutting time, especially at early stages where work cannot be parallelized efficiently. For that reason, estimates make the most sense when team size is defined per stage.

This approach does not cover every nuance of an AI project, but it works well as a starting point, especially at the PoC and MVP stages. In our projects, the AI scope is usually handled by 1 ML engineer, with additional support added only when it clearly makes sense.

Based on this model, rough ranges look as follows:

- AI PoC. Here, the work is usually handled by 1 ML engineer. At this stage, the goal is to validate the approach and work through data-related uncertainty.

With a timeline of 2-6 weeks, PoC effort often falls into the $3,000-17,000 range.

- AI MVP. Here, the scope expands. Integration, testing, and iteration speed start to matter more. In these cases, a 2nd ML engineer may join part-time to support experiments, validation, or specific technical tasks.

A 4-6 week AI MVP with two ML engineers typically falls in the ~$13,000-$34,000 range for the AI scope. For this project, these figures were part of the initial estimates for moving beyond the PoC stage. While the solution remained at the PoC level, the projected MVP range aligned with what we see in similar healthcare AI projects.

- Ongoing AI project support and maintenance. As mentioned earlier, AI work continues after release. This ongoing phase comes with a separate cost that teams should plan for from the start.

For projects like the one described in this article, ongoing AI support typically translates into a monthly budget of around $1,300-4,500, depending on the level of involvement and hourly rate.

These costs cover continued model support and incremental improvements rather than new feature development and help keep the system stable and reliable as usage grows.

Additional cost drivers often include:

- Cloud infrastructure and compute. Infrastructure costs depend on traffic, data volume, and compute intensity. In practice, monthly costs often range from $100 to $10,000+, especially for projects that rely on frequent model inference requests or LLM-based interactions.

- Engineering, QA, DevOps, and product work beyond the AI scope. AI rarely exists in isolation. Backend integration, frontend updates, testing, deployment, and product coordination add to the overall cost beyond the AI scope itself.

- Token usage. For solutions that rely on large language models, token usage directly affects cost. For example, when using Claude Opus 4.6, input tokens cost $5 per million tokens for requests up to 200K tokens, and $10 per million tokens once the input exceeds 200K tokens, which makes long prompts and large context windows a direct cost driver at scale.

In practice, data remains the most unpredictable factor. When data quality is lower than expected or additional data is required, timelines stretch, and budgets grow. This is normal for AI projects and should be planned for early.

In practice, most AI PoCs spend the majority of their effort on data preparation, validation, and iteration rather than model code itself. This project followed the same pattern. This work was included in the overall PoC estimate and did not represent an additional budget line. Depending on the depth of refinement and edge-case handling, the data-related portion accounted for the largest share of the total PoC cost.

To get a rough starting point, you can use our app development cost calculator, which helps translate scope and timelines into an initial budget range.

If you need more accuracy, AI consulting allows a deeper look into data quality, timelines, and technical constraints that affect final pricing.

Let’s develop a great AI product together!